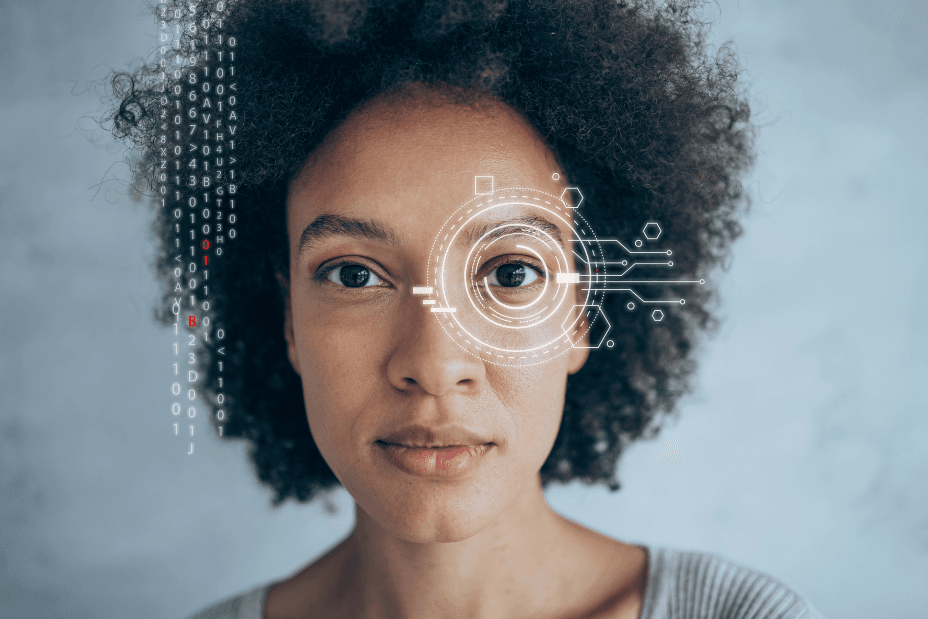

Fraud has entered a new phase. What was once opportunistic cybercrime now operates as a coordinated, technology-driven system.

A new global threat assessment from INTERPOL warns that financial fraud has evolved into one of the fastest-growing forms of transnational organised crime, now sitting at the centre of criminal ecosystems enabled by AI, low-cost tools and global collaboration.

“AI has fundamentally changed the economics of fraud,” says Matthew Renirie, Co-Founder of Certified AI Access.

He warns that recent data points to a sharp acceleration:

- Deepfake fraud has increased more than tenfold globally in recent years, becoming one of the fastest-growing forms of identity fraud.

- A deepfake attempt now occurs every five minutes globally (Entrust).

- AI-driven fraud losses are projected to reach $40 billion annually by 2027 (Deloitte).

According to INTERPOL, AI-enhanced fraud is significantly more profitable than traditional methods, with emerging “agentic AI” systems capable of autonomously executing full fraud campaigns.

“Criminals are now operating like startups,” says Renirie. “They have tooling, infrastructure, distribution and optimisation. AI has collapsed the cost of deception and removed the traditional constraints that limited fraud.”

South Africa is already seeing early signs of this shift. “The National Financial Ombud Scheme South Africa has reported a rise in AI-generated complaints entering formal systems, including highly sophisticated submissions with fabricated legal references and synthetic narratives,” Renirie explains. “At the same time, local banks are warning of increasingly advanced impersonation scams, from voice cloning to AI-driven social engineering.”

“The reality is that most organisations still rely on traditional fraud and cybersecurity systems designed to detect incidents after they occur, but AI-enabled fraud is already operating in real time,” he adds.

A Critical Gap in Enterprise Technology Stacks

He says that the rise of AI-driven fraud is exposing a structural gap: deepfake and synthetic media detection is not embedded in most enterprise security stacks. “Traditional controls such as KYC, biometrics and call verification are increasingly being bypassed by AI-generated content.”

From Detection to Digital Private Security

In response, Certified AI Access, in partnership with Reality Defender, is deploying real-time, multi-modal detection technology across South Africa.

The platform detects AI-generated voice, video, image and text in real time.

“As fraud has industrialised, the response must evolve,” says Renirie. “Just as South Africa built a private security industry to supplement policing, businesses now need a form of digital private security: a continuous layer of defence against AI-driven threats.”

A Defining Moment for Business and Regulation

He explains that INTERPOL’s findings show that fraud is now a systemic risk with implications for institutional trust, economic stability and national security.

“For South African organisations, the window to act is narrowing,” adds Renirie. “The absence of reported deepfake cases is not proof that it’s not happening; it’s proof that most organisations don’t yet have the capability to detect it.”

For more information, visit certifiedaiaccess.com and realitydefender.com.

#Fraud #Industrialises #INTERPOL #Warns #Global #Crime #Networks